Benchmarks for AI Models and Agents on CAD Tasks

Parametric CAD Bench is a collection of benchmarks for evaluating AI models and agents on CAD design and 3D modeling tasks.

A community effort to build the best open parametric CAD datasets, initiated by the gNucleus AI team.

Leaderboard

| Rank | Model | Agent | Geom Score | Spec Score | Combined | Cost (USD) |
|---|---|---|---|---|---|---|
| 1 | gpt-5.5 | codex | 80.8 | 93.9 | 83.2 | $170.00 |
| 2 | gemini-3.1-pro-preview | mini-swe-agent | 74.2 | 95.4 | 79.0 | $70.82 |
| 3 | gpt-5.5 | mini-swe-agent | 71.4 | 89.3 | 74.4 | $42.35 |
| 4 | claude-opus-4-7 | mini-swe-agent | 69.3 | 89.0 | 73.4 | $29.84 |
| 5 | gemini-3.1-pro-preview | gemini-cli | 68.9 | 83.9 | 72.9 | $51.28 |
| 6 | claude-opus-4-7 | claude-code | 62.4 | 83.3 | 65.5 | $73.25 |
| 7 | claude-sonnet-4-6 | claude-code | 47.8 | 68.3 | 51.8 | $96.34 |
| 8 | claude-haiku-4-5 | claude-code | 23.3 | 54.1 | 28.4 | $24.67 |
| 9 | gemini-3.1-flash-lite-preview | mini-swe-agent | 19.6 | 47.6 | 24.1 | $3.00 |
| 10 | gemini-3.1-flash-lite-preview | gemini-cli | 10.0 | 24.2 | 11.9 | $7.63 |

Benchmark Tasks

3D Parametric Part Generation

Generate editable, parametric 3D CAD part models from natural-language prompts and reference inputs.

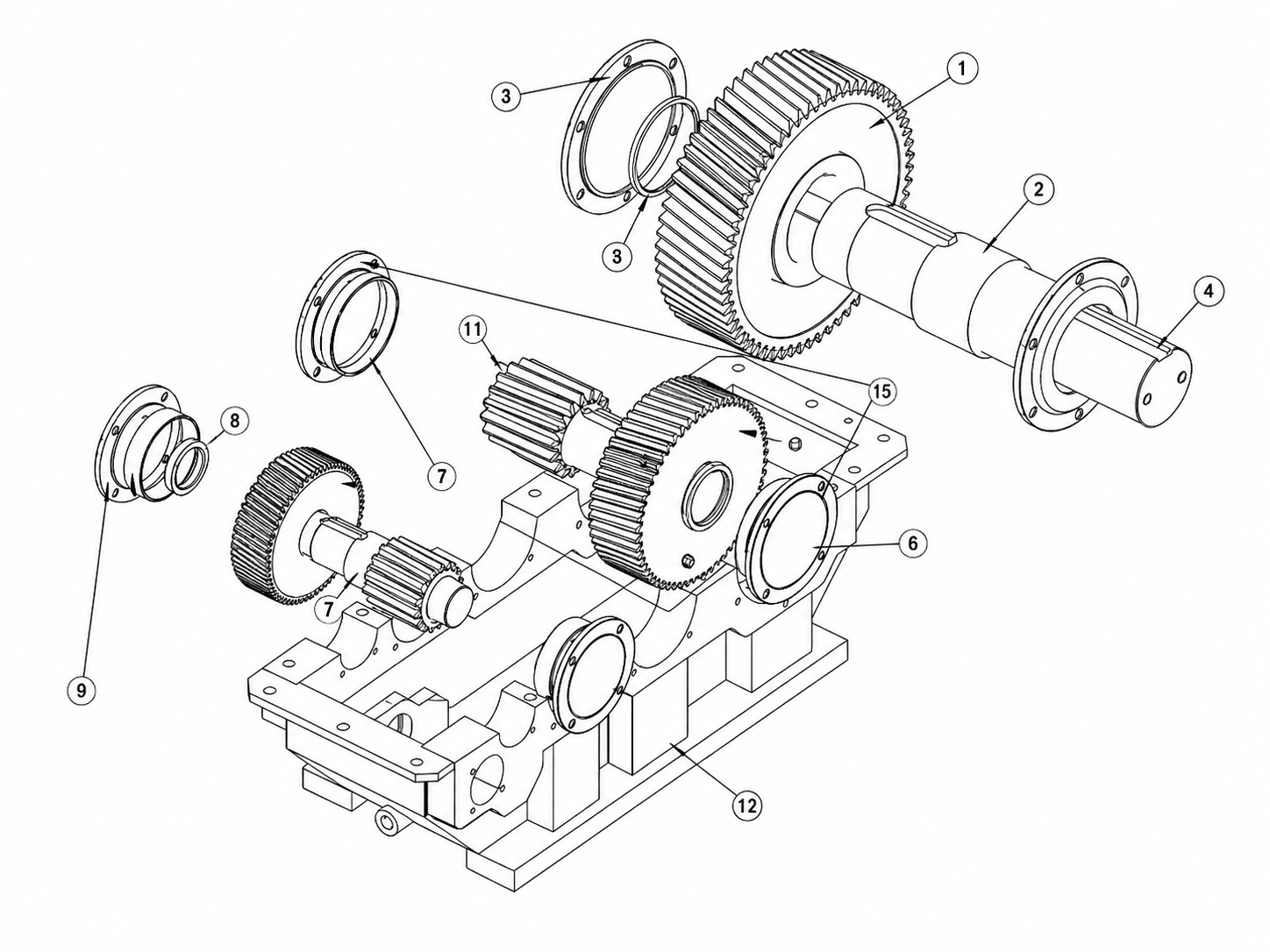

Assembly Generation

Generate multi-part assemblies with proper mates, constraints, and component hierarchy.

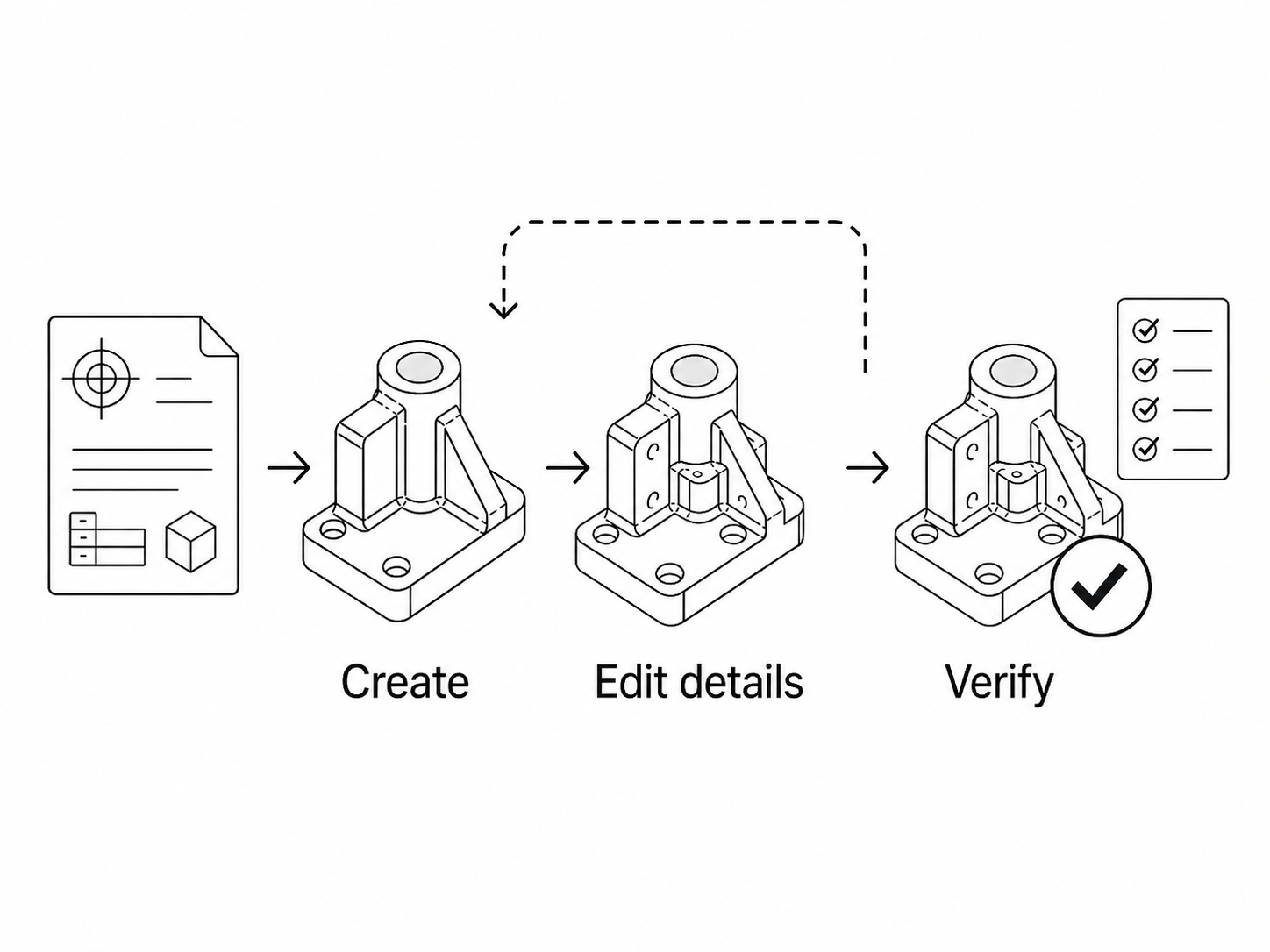

Complex CAD Workflow

Multi-step CAD workflows that generate, iteratively edit, and verify designs until the model meets the target spec.

Evaluation Methods

CAD evaluation should check not only visual similarity, but also whether the generated CAD is valid, accurate, rebuildable, and consistent with the design spec.

Each task is scored automatically in a sandboxed CAD environment, comparing the generated CAD against the design spec and reference CAD (ground truth) across the axes below. Scoring is deterministic — same output, same score.

Geometry Accuracy

Measures how closely the generated geometry matches the reference part.
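The page does not say which geometric metric the evaluator uses. As a rough illustration only, one common way to compare two solids is volumetric intersection over union (IoU) on a voxel grid. The sketch below assumes both parts have already been voxelized into sets of integer cells; `box_voxels` and `volumetric_iou` are hypothetical helper names, not part of the benchmark.

```python
from typing import Set, Tuple

Voxel = Tuple[int, int, int]


def box_voxels(origin: Voxel, size: Voxel) -> Set[Voxel]:
    """Return the unit voxels that fill an axis-aligned box (illustrative stand-in for a voxelizer)."""
    ox, oy, oz = origin
    sx, sy, sz = size
    return {
        (x, y, z)
        for x in range(ox, ox + sx)
        for y in range(oy, oy + sy)
        for z in range(oz, oz + sz)
    }


def volumetric_iou(generated: Set[Voxel], reference: Set[Voxel]) -> float:
    """Intersection over union of two occupied-voxel sets, in the range [0, 1]."""
    union = generated | reference
    if not union:
        return 1.0  # convention chosen here: two empty solids count as identical
    return len(generated & reference) / len(union)


# Reference part: a 10 x 10 x 10 block. Generated part: same footprint, 2 units too short.
reference = box_voxels((0, 0, 0), (10, 10, 10))
generated = box_voxels((0, 0, 0), (10, 10, 8))
iou = volumetric_iou(generated, reference)
print(f"IoU = {iou:.2f}")  # 800 shared voxels out of 1000 in the union
```

Because the computation involves only set operations on fixed inputs, the same pair of parts always yields the same value, which matches the deterministic-scoring property described above.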

Constraint & Assembly Correctness

Checks constraint satisfaction, mating validity, and assembly stability.

Parametric Correctness

Verifies CAD model consistency with the spec parameters.
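A minimal sketch of what such a consistency check could look like, assuming the spec is a flat mapping of parameter names to target values and that the same quantities can be measured from the generated model. The parameter names, the 1% relative tolerance, and the pass-fraction scoring are all assumptions made for illustration; the actual evaluator may work differently.

```python
import math
from typing import Dict


def check_parameters(
    spec: Dict[str, float],
    measured: Dict[str, float],
    rel_tol: float = 0.01,
) -> Dict[str, bool]:
    """Mark each spec parameter as satisfied if the measured value is within rel_tol."""
    results = {}
    for name, target in spec.items():
        value = measured.get(name)
        # A parameter that cannot be measured on the model counts as a failure.
        results[name] = value is not None and math.isclose(value, target, rel_tol=rel_tol)
    return results


def spec_score(results: Dict[str, bool]) -> float:
    """Percentage of spec parameters that passed (0.0 when there is nothing to check)."""
    if not results:
        return 0.0
    return 100.0 * sum(results.values()) / len(results)


# Hypothetical spec and measurements for a drilled mounting plate.
spec = {"length_mm": 120.0, "width_mm": 40.0, "hole_diameter_mm": 6.0, "hole_count": 4}
measured = {"length_mm": 120.0, "width_mm": 40.2, "hole_diameter_mm": 6.5, "hole_count": 4}

results = check_parameters(spec, measured)
score = spec_score(results)
print(results)
print(score)
```

In this example the width is within tolerance but the hole diameter is not, so three of the four parameters pass.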

Topology / Structure

Evaluates topological validity and part structure correctness.

Agent Workflow Success

Assesses task completion rate and workflow correctness.

Efficiency

Measures token usage, execution time, and resource efficiency.
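The leaderboard reports cost alongside score, so one simple way to read the two together is a Pareto frontier: the agent–model combinations for which no other entry is both cheaper and higher-scoring. The sketch below uses the Combined and Cost (USD) columns from the leaderboard; the frontier analysis itself is an illustration, not a metric the benchmark is stated to compute.

```python
from typing import List, NamedTuple


class Entry(NamedTuple):
    model: str
    agent: str
    combined: float
    cost_usd: float


# Combined score and cost, copied from the leaderboard table.
LEADERBOARD = [
    Entry("gpt-5.5", "codex", 83.2, 170.00),
    Entry("gemini-3.1-pro-preview", "mini-swe-agent", 79.0, 70.82),
    Entry("gpt-5.5", "mini-swe-agent", 74.4, 42.35),
    Entry("claude-opus-4-7", "mini-swe-agent", 73.4, 29.84),
    Entry("gemini-3.1-pro-preview", "gemini-cli", 72.9, 51.28),
    Entry("claude-opus-4-7", "claude-code", 65.5, 73.25),
    Entry("claude-sonnet-4-6", "claude-code", 51.8, 96.34),
    Entry("claude-haiku-4-5", "claude-code", 28.4, 24.67),
    Entry("gemini-3.1-flash-lite-preview", "mini-swe-agent", 24.1, 3.00),
    Entry("gemini-3.1-flash-lite-preview", "gemini-cli", 11.9, 7.63),
]


def pareto_frontier(entries: List[Entry]) -> List[Entry]:
    """Keep entries that no cheaper-or-equal entry beats on combined score."""
    frontier = []
    best_score = float("-inf")
    # Walk from cheapest to most expensive; an entry survives only if it
    # improves on the best score seen at a lower (or equal) cost.
    for entry in sorted(entries, key=lambda e: (e.cost_usd, -e.combined)):
        if entry.combined > best_score:
            frontier.append(entry)
            best_score = entry.combined
    return frontier


frontier = pareto_frontier(LEADERBOARD)
for e in frontier:
    print(f"{e.model} / {e.agent}: {e.combined} for ${e.cost_usd:.2f}")
```

Entries off the frontier, such as claude-sonnet-4-6 with claude-code, cost more than at least one other combination that scores higher.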

Latest Updates

May 13, 2026

A new benchmark for AI agents that design parametric 3D mechanical parts in FreeCAD. It runs a multi-step agentic loop and scores geometric correctness and the consistency between the generated CAD and the provided spec, across 10 agent–model combinations spanning frontier vendors. Early results: GPT-5.5 via Codex leads with a combined score of 0.832 (shown as 83.2 on the leaderboard) and is also the most expensive combination at $170. There is a visible harness effect: the same model scores 74.4 when run through mini-swe-agent.

May 10, 2026

A programmatic grader for evaluating AI-generated parametric FreeCAD parts — checks geometry similarity to the ground truth and CAD/spec consistency.

May 08, 2026

Open-sourced cad-gen-freecad on HuggingFace: native FreeCAD parts with parametric feature history, design specs, and renderings, aimed at accurate parametric CAD generation from text prompts.

Dataset